They Trained on OUR Internet

Anthropic's training data included the Philippines. Anthropic's access policy excludes the Philippines. Every other major AI platform I depend on follows the same pattern under a different coat of paint.

I run AI-powered content operations from the Philippines using Anthropic, OpenAI, GCP, AWS, Fly.io, and more. Some of those tools only work when I route through a VPN, which I open before my CLI.

I’m writing this from inside the supply chain.

I’ve been studying AI ethics at LSE this month, working through market failures, monopolistic concentration, and the gap between countries that get to write the rules and countries that absorb them. Three weeks into the coursework, after Claude Design was announced as a research preview, I tried to use it from Manila on a paid Max subscription, and today the connection failed at the ISP level. I opened my VPN, then my CLI, then went back to studying the failure mode I had just experienced firsthand.

The block

From my PLDT and Globe residential connections, requests to Anthropic’s endpoints fail. The same account works the moment I route through a VPN endpoint in a supported country. Equal opportunity much?

Filipino developers are sharing the same workaround on Reddit. Whatever enforces the block sits below account level and keys off geography, not whether I’m a paying user. My paid Max subscription is invisible to it because the rule targets routes, and account status falls outside its scope.

Outages happen when systems fail. What I’m describing is the system performing exactly as configured by someone who decided my country sits outside the supported list.

When was the last time your AI tools required a VPN to reach from your own country? When was the last time you paid for a tier that your geography invalidated?

The training data

Anthropic, OpenAI, and Google trained on the global internet, which is the whole pitch. They built models that understand the world because they read the world, and that reading included content produced by Filipino journalists, designers, developers, marketers, bloggers, forum posters, and small business owners, with all of those contributors absent from the compensation list, the credit list, and the consent list.

Read this as supply chain analysis. Filipino digital labor sits in the model weights, while the same digital labor force needs a VPN to query the model that contains it.

If you’re inside an LLM company reading this, I have three questions for you. What percentage of your training corpus came from regions where your product now requires a VPN to access? Has anyone on your team calculated that ratio? Would you publish it if they had?

The access ladder

Google has sharply tightened the free API experimentation tier in AI Studio, pushing Pro models behind paid plans and shrinking the free quota to the point where serious prototyping now costs money.

Google for Startups cloud credits still exist, technically, with an application process that requires investor documentation, accelerator affiliation, and ecosystem proximity calibrated for San Francisco.

I have pitched DashoContent at AppWorks Demo Day in Taipei, at e27 Echelon in Singapore, at She Loves Tech where we placed third in the Philippines, and at the Training of Trainers in Seoul. Sixteen years of building from the Philippines put me in those rooms. The credits program’s criteria still place me outside the eligibility window.

How many Filipino founders have you seen at YC demo day? At Sequoia’s portfolio events? Now compare that to how much of the training data came from Asia. The asymmetry has structure. The access ladder reflects the funding ladder, the funding ladder reflects the geography ladder, and all three ladders point the same direction.

Who is the gatekeeper deciding which markets earn free tiers? When that decision gets made, is anyone in the room from the markets being decided about?

What it actually costs

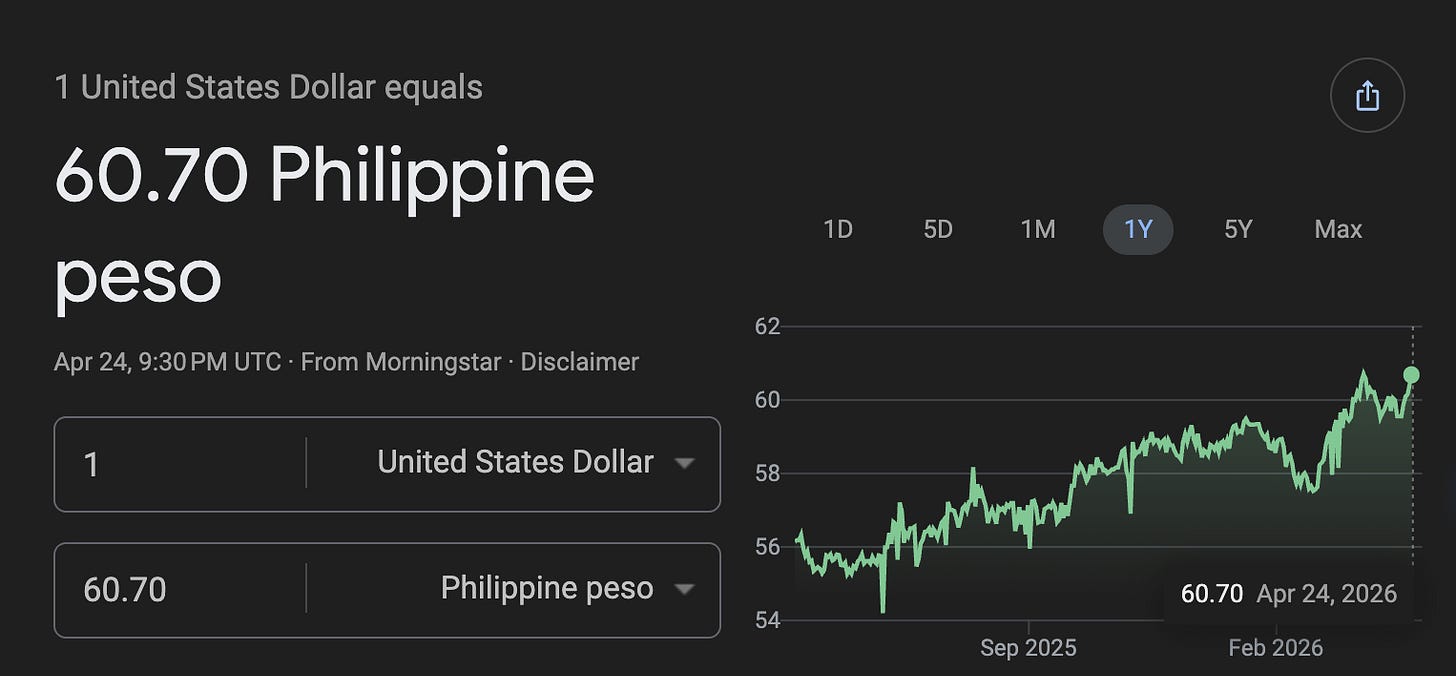

I am building DashoContent and Third Team Ventures right now, running on Anthropic, OpenAI, GCP, AWS, Fly.io, and more. Every line item is denominated in USD. When the peso slips against the dollar, I lose margin the same week.

When OpenAI throttled our account during the launch of dasho.ai, an AI brand voice analyzer I had built and shipped on a Wednesday after weeks of promotion, I watched my own product flicker offline within an hour and sat waiting for the credit limit to reset. When Google pulled the free experimentation tier, three n8n prototypes I had running for clients stopped being free overnight. The notice for every one of those decisions was calibrated for English-speaking enterprise markets, which puts my market outside the announcement audience by default.

Who absorbs the cost when the platform changes its pricing or access policy? The cost lands on the founders downstream of the decision, every time.

The ethical frame

Thomas Ferretti, the LSE professor teaching my course, puts it cleanly: profit-seeking is only ethically justified insofar as it contributes to economic efficiency, and the moment it exploits market failures, restricts access, externalises costs, or compounds monopoly advantage, it loses that justification.

By that standard, blocking AI tool access in developing markets while continuing to train on globally produced content fails the test. It fails under the same ethical framework these companies cite when they publish their responsible AI principles, sign their AI safety pledges, and host their AI for Good summits.

Why does the responsible AI conversation skip responsible distribution every time? Why is “AI for humanity” the marketing line and “AI for paying markets we already understand” the operating policy?

The question I am leaving here

I use my current AI tool stack daily because the market offers no comparable alternative for what I’m building, and that dependence is the structural condition under which I work. It’s worth saying plainly that I am writing this with my account active and my subscription paid.

The founders most exposed to this condition are building real products for real local problems with real local users, in markets that the platform owners have classified as low priority, hard to underwrite, or blocked outright. The AI access conversation tracks who gets displaced by automation. It mostly skips who gets to build, in which countries, under what cost structure, with which tools, on whose terms.

Here are the questions I want answered.

If you’re building from the Global South, what does your stack actually cost you in workarounds? In dollars? In hours per week of friction the announcements skip?

If you’re inside Anthropic, Google, or OpenAI reading this, would you ship a product knowing the population you trained on requires a circumvention tool to reach it from their own country? Has that conversation happened in your org? Did anyone block shipping over it?

If you’re a policymaker in any ASEAN country, are you negotiating access terms with the platforms, or assuming the market will sort it out? Has anyone modelled what it costs your founder ecosystem when the market fails to sort it out?

What I have is a Substack, a VPN, and sixteen years of building from a country the platforms I depend on treat as out of scope.

I ask these questions every time I open the VPN or get asked, yet again, to upgrade from my current plan.

If you’re building from a place outside the launch map, I want to hear from you in the comments.